How Image to Video AI Works: From Still Images to Motion

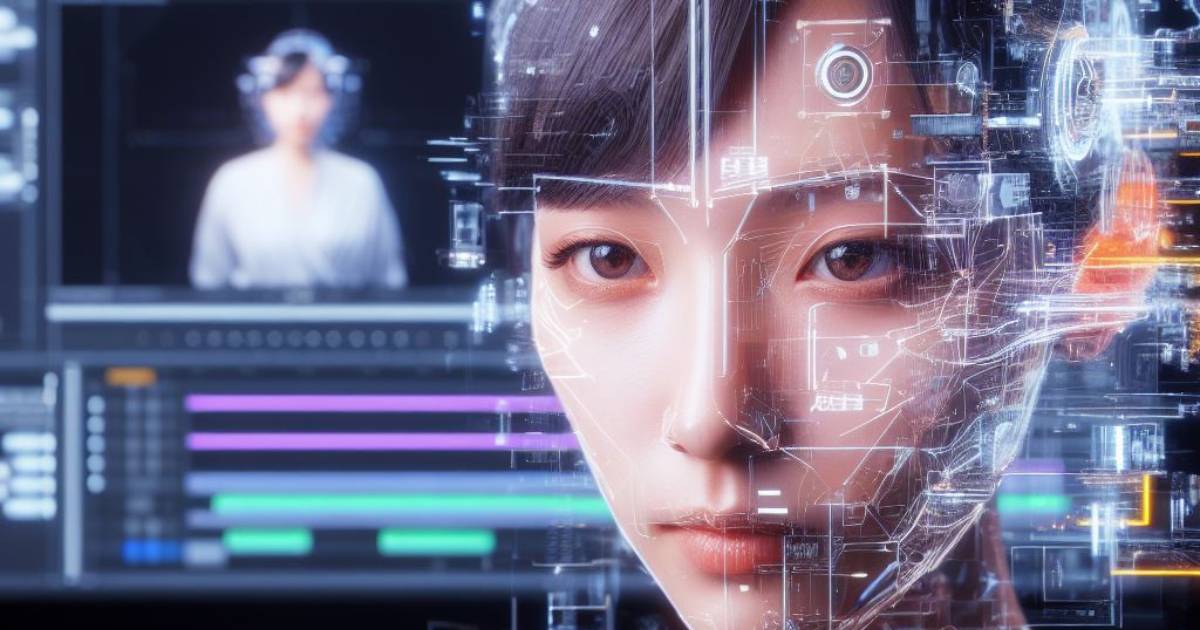

The ability to transform static visuals into dynamic motion has become one of the most influential shifts in modern digital production. Today, image to video AI technologies allow creators, studios, and publishers to convert still images into animated video content faster than ever before. What once required full animation pipelines can now begin with a single image and a carefully written prompt.

For game studios, marketing teams, and content-driven businesses, this shift opens new possibilities but also introduces new challenges. While AI tools dramatically accelerate production, the quality of results depends heavily on process understanding, prompt structure, and integration into professional pipelines. Image to video AI is not a magic button; it is a tool that requires direction, intent, and creative oversight to deliver production-ready results.

What Is Image to Video AI and How It Works

From Static Frames to Motion

Image to video AI is built around the idea of synthesizing believable motion from static visual input. Instead of animating objects frame by frame, the system analyzes a single image (or a small set of images) and predicts how visual elements could evolve over time. This prediction is based on patterns learned from large-scale video datasets rather than explicit animation rules.

At the core of this process is motion inference. The AI evaluates shapes, edges, lighting, and composition to determine what parts of the image are likely to move and how. For example, characters may exhibit subtle body motion, environments may shift with simulated camera movement, and background elements may respond with parallax effects. The generated motion is probabilistic: the AI produces one visually plausible outcome out of many possible ones rather than following deterministic animation logic, which is why the same image and prompt can yield different results from one generation to the next.

This approach fundamentally differs from traditional animation. There are no keyframes, skeletons, or animation curves defined by an artist. Instead, motion emerges from statistical understanding of how similar images behave in video form. This is why clear intent and guidance are so important when working with image to video AI systems.

The Role of Depth, Layers, and Perspective

Modern image to video AI systems attempt to reconstruct a sense of three-dimensional space from a two-dimensional image. They do this by inferring depth cues such as perspective lines, occlusion, scale differences, and lighting gradients. Based on these cues, the AI separates the image into implied layers: foreground, midground, and background.

Once these layers are inferred, the system can simulate camera movement rather than simple pixel distortion. This results in parallax motion, where foreground elements move faster than background elements, creating a more cinematic and realistic effect. Proper depth interpretation is one of the key factors that differentiates high-quality AI-generated video from unstable or artificial-looking results.
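The parallax relationship described above can be illustrated with a toy calculation. This is a sketch under stated assumptions, not a description of how any particular tool works: the three layers, their relative depth values, and the rule that on-screen shift is inversely proportional to depth are all chosen here for illustration.

```python
from dataclasses import dataclass


@dataclass
class Layer:
    name: str
    depth: float  # hypothetical relative distance from the camera; larger = farther


def parallax_shift(camera_shift_px: float, depth: float) -> float:
    """Apparent on-screen shift of a layer for a sideways camera move.

    Assumes shift is inversely proportional to depth, so nearer layers
    move more than distant ones.
    """
    if depth <= 0:
        raise ValueError("depth must be positive")
    return camera_shift_px / depth


# Illustrative depths only; a real system would infer them from the image.
layers = [
    Layer("foreground", 1.0),
    Layer("midground", 2.0),
    Layer("background", 8.0),
]

shifts = {layer.name: parallax_shift(40.0, layer.depth) for layer in layers}
for name, shift in shifts.items():
    print(f"{name}: {shift:.1f} px")
```

With these made-up depths, the foreground moves eight times as far as the background for the same camera move, which is the difference the eye reads as depth.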

Perspective also plays a critical role. Images with clear vanishing points, strong composition, and well-defined spatial relationships give the AI more information to work with. Conversely, flat compositions or visually cluttered images make depth inference difficult, often leading to warping or unnatural motion. Understanding how AI interprets depth allows creators to prepare images that guide the system toward more controlled and believable outcomes.

Why Image Quality Matters

Image to video AI is highly sensitive to input quality. Because the system extrapolates motion from visual cues, any ambiguity or noise in the source image is amplified during generation. Low resolution, compression artifacts, inconsistent lighting, or unclear subject separation can all lead to unstable or distorted video output.

High-quality images provide the AI with clear structural information. Sharp edges help define object boundaries, balanced lighting supports depth estimation, and intentional composition clarifies what should remain stable versus what can move. This is especially important for faces, characters, and detailed environments, where small errors become immediately noticeable once motion is introduced.

In professional workflows, images are often optimized specifically for AI video generation. This may include cleaning up backgrounds, enhancing contrast, simplifying overly complex areas, or adjusting color balance. Treating image preparation as a foundational step rather than an afterthought significantly increases the likelihood of producing usable, production-ready video content.

The Typical Image to Video AI Workflow

Step One: Image Preparation

The image preparation stage is the foundation of any successful image to video AI workflow. Although many tools allow users to upload raw images directly, professional results depend on deliberate visual refinement before generation begins. At this stage, the goal is to reduce ambiguity and guide the AI toward a clear interpretation of the scene.

Image preparation typically involves adjusting contrast, correcting color balance, and ensuring consistent lighting across the frame. Visual noise, compression artifacts, and unnecessary background elements are minimized to prevent misinterpretation during motion synthesis. When the subject is a character or product, clean separation from the background helps the AI maintain stable forms during animation.

Composition also plays an important role. Images with strong framing, clear focal points, and intentional depth cues tend to produce more predictable motion. In professional pipelines, images are sometimes modified or even created specifically for AI video generation, ensuring they contain the visual signals needed for controlled movement.
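A simple preflight check can catch some of these problems before an image is uploaded. The sketch below is only an illustration: it assumes the image has already been loaded and converted to a non-empty grid of grayscale values between 0 and 1, and the function name `preflight` and all three thresholds are hypothetical values that a team would need to tune against its own accepted images.

```python
from statistics import mean, pstdev


def preflight(
    gray: list[list[float]],
    min_short_side: int = 720,       # hypothetical resolution floor
    min_contrast: float = 0.10,      # hypothetical RMS contrast floor
    min_edge_strength: float = 0.02  # hypothetical sharpness floor
) -> list[str]:
    """Return warnings for a grayscale image given as rows of values in 0..1."""
    height, width = len(gray), len(gray[0])
    flat = [value for row in gray for value in row]
    warnings = []

    if min(width, height) < min_short_side:
        warnings.append("low resolution")

    # RMS contrast: standard deviation of the normalized luminance values.
    if pstdev(flat) < min_contrast:
        warnings.append("low contrast")

    # Mean absolute difference between horizontal neighbours,
    # used here as a crude proxy for edge sharpness.
    diffs = [abs(row[x + 1] - row[x]) for row in gray for x in range(width - 1)]
    if diffs and mean(diffs) < min_edge_strength:
        warnings.append("soft edges")

    return warnings
```

A check like this does not replace an artist's review; it only flags images that are likely to give the model ambiguous structure to work with.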

Step Two: Defining Motion Intent

Once the image is prepared, the next step is defining how the static visual should behave over time. This phase replaces traditional animation planning and functions more like a creative direction brief. Instead of specifying keyframes, creators describe motion characteristics using prompts, parameters, or preset controls.

Motion intent includes decisions about camera behavior, subject movement, environmental dynamics, and overall pacing. A scene might call for a slow forward camera push, subtle environmental motion, or gentle character animation. Clear intent reduces randomness and helps the AI generate motion that aligns with creative goals.

Professional teams treat this step with the same care as storyboarding or animation planning. Ambiguous or conflicting instructions often lead to unstable results, while focused, intentional direction produces smoother, more coherent video output. This is where understanding how AI interprets language becomes a major advantage.
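One way to make motion intent explicit is to write it down as a small structured brief and check it for contradictions before generating. The following is a minimal sketch: the `MotionIntent` fields and the list of conflicting camera instructions are assumptions made for this example, not part of any tool's interface.

```python
from dataclasses import dataclass, field
from itertools import combinations

# Hypothetical pairs of camera instructions that contradict each other.
CONFLICTS = {
    frozenset({"push in", "pull back"}),
    frozenset({"pan left", "pan right"}),
    frozenset({"locked-off", "push in"}),
}


@dataclass
class MotionIntent:
    camera: list[str]
    subject: str = "none"
    environment: str = "none"
    pacing: str = "slow"
    keep_stable: list[str] = field(default_factory=list)

    def conflicts(self) -> list[tuple[str, str]]:
        """Return pairs of camera instructions that cannot both be satisfied."""
        return [
            (a, b)
            for a, b in combinations(self.camera, 2)
            if frozenset({a, b}) in CONFLICTS
        ]


brief = MotionIntent(
    camera=["push in", "pull back"],
    subject="gentle breathing",
    keep_stable=["face", "logo"],
)
print(brief.conflicts())
```

An empty result does not guarantee a good brief, but a non-empty one is a cheap signal that the instructions should be simplified before spending generations on them.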

Step Three: Generation and Iteration

Image to video AI generation is inherently iterative. Rarely does the first output meet production standards without refinement. Instead, teams generate multiple variations, adjusting prompts, motion strength, duration, or stylistic constraints between iterations.

Effective iteration is not trial and error in a random sense. Experienced users analyze each result, identify specific issues, and make targeted adjustments. For example, if motion feels too aggressive, intensity may be reduced; if depth feels flat, camera movement may be refined.

Iteration also helps establish consistency across multiple shots or assets. By documenting successful prompt structures and settings, teams can reproduce results more reliably. This systematic approach transforms AI generation from experimentation into a repeatable production process.
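Documenting runs can be as light as a list of records that is filtered down to the settings a reviewer approved. The sketch below assumes a hypothetical `Run` record with a `motion_strength` value between 0 and 1; real tools expose different parameters under different names.

```python
import json
from dataclasses import dataclass, asdict


@dataclass
class Run:
    prompt: str
    motion_strength: float  # hypothetical 0..1 parameter
    duration_s: float
    verdict: str  # "approved" or "rejected"
    note: str = ""


def approved_settings(runs: list[Run]) -> list[dict]:
    """Keep only the settings of runs a reviewer approved."""
    return [asdict(run) for run in runs if run.verdict == "approved"]


runs = [
    Run("slow push in, gentle fog drift", 0.8, 4.0, "rejected", "motion too aggressive"),
    Run("slow push in, gentle fog drift", 0.4, 4.0, "approved"),
]

# The JSON text can be saved to a shared file and reused for later shots.
library = json.dumps(approved_settings(runs), indent=2)
print(library)
```

Keeping the rejected runs and their notes in the raw log is also useful, since they record which adjustments did not help.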

Popular Types of Image to Video AI Services

Text-Guided Image Animation Tools

Text-guided image animation tools rely primarily on natural language prompts to define how a static image should transform into motion. Users describe camera behavior, subject movement, atmosphere, and pacing, and the AI interprets these instructions to generate a video sequence. This approach offers a high degree of creative freedom, but also places more responsibility on prompt quality.

These tools are especially popular for concept visualization, mood videos, and early-stage creative exploration. Because motion is driven by descriptive language rather than fixed presets, results can vary significantly between generations. This variability makes text-guided systems powerful for experimentation but less predictable for production without experience.

In professional workflows, text-guided tools are often used in the ideation phase. Teams explore different motion directions quickly, identify promising results, and then refine prompts iteratively. When handled with discipline, these tools can produce visually compelling sequences that would otherwise require extensive manual animation.

Depth-Based and Camera Motion Tools

Depth-based image to video AI services focus on simulating camera movement rather than altering the subject itself. These systems analyze the image to infer depth layers and then animate the scene using pans, tilts, zooms, or dolly movements. The result is a cinematic effect that brings still images to life without distorting core elements.

This category is particularly effective for environments, landscapes, architectural visuals, and game backgrounds. Because the subject remains largely static, the risk of deformation is lower, and results tend to be more stable. Subtle motion adds visual interest while preserving the integrity of the original artwork.

Depth-based tools are frequently used in marketing, trailers, and presentation content, where controlled motion is preferred over dramatic animation. They integrate well into professional pipelines because they offer consistency and repeatability, especially when multiple images need to be animated in the same style.

Hybrid AI Video Platforms

Hybrid image to video AI platforms combine multiple input methods into a single system. These tools may support image input, text prompts, motion presets, style constraints, and parameter controls simultaneously. This hybrid approach offers a balance between creative flexibility and production stability.

Such platforms are better suited for commercial and professional use, where consistency across multiple outputs is critical. By combining preset-based controls with prompt-driven customization, hybrid systems reduce unpredictability while still allowing creative direction. This makes them ideal for campaigns, series-based content, and game-related video assets.

In professional environments, hybrid platforms are often integrated into broader content pipelines. Teams develop internal guidelines for prompt structure, parameter ranges, and visual standards, turning AI video generation into a repeatable process rather than an experimental one. This structured use is what enables image to video AI to scale beyond one-off visuals into ongoing production.

How to Write Effective Prompts for Image to Video AI

Be Specific About Motion, Not Just Style

One of the most common misconceptions when working with image to video AI is the belief that visual style alone determines the quality of the result. While stylistic cues are important, motion description is the primary driver of how the final video behaves. AI systems respond most reliably when prompts clearly define what should move, how it should move, and what should remain stable throughout the sequence.

Effective prompts focus on camera behavior, subject dynamics, and environmental response. Instead of using abstract descriptors such as “cinematic” or “dramatic,” professional prompts describe concrete actions like slow camera push-ins, subtle background parallax, or gentle subject breathing motion. These details give the AI a framework for generating motion that feels intentional rather than random.

In professional workflows, prompts are often written with the mindset of an animation director rather than a stylist. The goal is to guide motion in a controlled way, ensuring that the AI enhances the image rather than overpowering it. This approach dramatically improves output consistency and reduces the number of unusable generations.

Describe Timing and Intensity

Beyond defining motion type, successful prompts also communicate pacing and intensity. Image to video AI systems interpret descriptive terms related to speed, smoothness, and motion strength, which directly affect visual stability. Without these cues, the AI may default to exaggerated or uneven movement that feels artificial.

Professional prompt writers think in terms of temporal flow. They describe whether motion should feel slow and continuous, gently looping, or deliberately restrained. Words that suggest moderation and control help the AI avoid abrupt transitions or jittery motion, especially in character or environment-focused visuals.

Intensity control is particularly important when generating longer sequences. Subtle motion sustained over time feels more natural than aggressive movement compressed into a short duration. By clearly defining both timing and intensity, prompts help the AI maintain coherence across the entire clip instead of peaking too early or collapsing into visual noise.
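The trade-off between duration and intensity comes down to simple arithmetic. As a rough illustration, assume a constant-speed camera move and a frame rate of 24 frames per second (both assumptions for this example); the function name `shift_per_frame` is hypothetical.

```python
def shift_per_frame(total_shift_px: float, duration_s: float, fps: int = 24) -> float:
    """Average per-frame displacement for a constant-speed move."""
    frames = duration_s * fps
    if frames <= 0:
        raise ValueError("clip must contain at least one frame")
    return total_shift_px / frames


fast = shift_per_frame(120, duration_s=2)  # 120 px spread over 48 frames
slow = shift_per_frame(120, duration_s=6)  # 120 px spread over 144 frames
print(f"{fast:.2f} px/frame vs {slow:.2f} px/frame")
```

The same total movement spread over three times the duration changes each frame by a third as much, which is one reason restrained motion over a longer clip tends to read as smoother.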

Avoid Overloading the Prompt

While clarity is essential, overloading a prompt with excessive detail often leads to worse results. Image to video AI models can become confused when asked to execute too many instructions simultaneously, especially if those instructions conflict or operate at different conceptual levels. Long, unfocused prompts increase randomness rather than precision.

Effective prompts are structured and prioritized. Core motion instructions come first, followed by secondary stylistic or atmospheric details if needed. This hierarchy helps the AI understand what matters most and prevents it from attempting to satisfy every instruction equally.
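This hierarchy can be enforced mechanically when prompts are assembled from parts. The sketch below is one possible approach, not a rule of any specific model: the helper `build_prompt` and the cap of five phrases are assumptions chosen for the example.

```python
def build_prompt(core: list[str], secondary: list[str], max_phrases: int = 5) -> str:
    """Join phrases with core motion first; drop secondary detail beyond the cap."""
    room = max(max_phrases - len(core), 0)
    return ", ".join(core + secondary[:room])


prompt = build_prompt(
    core=["slow camera push-in", "subtle background parallax", "subject stays still"],
    secondary=["soft morning haze", "warm light", "film grain", "muted palette"],
)
print(prompt)
```

Here the three core motion phrases are always kept, and only as many stylistic phrases are added as the cap allows, so extra atmosphere never crowds out the motion instructions.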

In professional environments, prompt writing is treated as an iterative craft. Teams refine prompt templates over time, removing unnecessary descriptors and focusing on language that consistently produces usable results. This discipline transforms prompt writing from experimentation into a repeatable production skill.

Common Mistakes in Image to Video AI Use

Treating AI as a One-Click Solution

A common and expensive mistake is treating image-to-video generation like a “generate once and ship” button. When people upload an image, type a vague prompt, and expect a polished, brand-ready clip, they usually get unstable motion, warped details, or strange behavior around edges, faces, hands, small text, and repeating patterns. The reason is simple: the model is not following a deterministic animation plan; it is predicting plausible motion from learned patterns. If the image contains ambiguity—busy backgrounds, unclear separation between subject and environment, inconsistent lighting, or fine details that don’t read cleanly—the AI will “guess,” and those guesses often show up as jitter, melting textures, breathing geometry, or camera moves that feel accidental rather than directed.

Professional teams avoid this by treating AI like an acceleration layer inside a controlled workflow. They start with a clear shot objective (what must stay stable, what can move, what the camera should do, what the emotional tone is), then prepare the image so the model has an unambiguous focal subject and readable depth cues. After that, they iterate intentionally: one change per generation cycle (motion strength, camera type, pacing, or the specific motion phrase) so they can learn what actually improves the output. In other words, they manage AI the way they would manage a fast junior artist—clear brief, constrained options, review, refine—because that’s what turns “randomly impressive” generations into repeatable production.
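The "one change per generation cycle" rule is easy to check if settings are stored as plain dictionaries. This is a minimal sketch; the setting names used in the example (`motion_strength`, `camera`, `pacing`) are hypothetical.

```python
def changed_keys(previous: dict, current: dict) -> list[str]:
    """Names of settings whose values differ between two generations."""
    keys = sorted(set(previous) | set(current))
    return [key for key in keys if previous.get(key) != current.get(key)]


def is_single_change(previous: dict, current: dict) -> bool:
    """True when exactly one setting differs, so its effect can be isolated."""
    return len(changed_keys(previous, current)) == 1


take_1 = {"motion_strength": 0.8, "camera": "push in", "pacing": "slow"}
take_2 = {"motion_strength": 0.5, "camera": "push in", "pacing": "slow"}
take_3 = {"motion_strength": 0.3, "camera": "orbit", "pacing": "slow"}

print(changed_keys(take_1, take_2), is_single_change(take_1, take_2))
print(changed_keys(take_2, take_3), is_single_change(take_2, take_3))
```

When two settings change at once, as between the second and third takes here, it is no longer clear which one caused the difference in output.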

Ignoring Visual Consistency

Another frequent issue is producing a set of AI videos from images that don’t share a consistent visual baseline. If one image is warm and soft, another is cool and contrasty, and a third has a different perspective or level of detail, image-to-video AI will amplify those differences rather than smooth them out. The result is a series of clips that may look decent individually but feel mismatched when placed together in a campaign, a pitch deck, a store page montage, or a trailer. Viewers notice inconsistency instantly, and in marketing contexts it reduces perceived quality and trust even if the underlying idea is strong.

The fix is to treat consistency as a pre-generation requirement, not a post-generation hope. In a production-minded workflow, teams align images first: similar color balance, comparable lighting direction, consistent framing and camera angle logic, and a similar level of detail. Then they standardize motion behavior by using a stable prompt structure and limiting variation to a small, controlled set of parameters. Instead of rewriting prompts from scratch every time, they build a repeatable template that enforces the same pacing, camera language, and “stability rules” across shots. This is how AI output starts feeling like one cohesive project rather than a collage of unrelated experiments.
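Part of this alignment can be checked numerically before generation. The sketch below assumes each image has already been reduced to its average red, green, and blue values, and it uses a deliberately crude warmth score (the red-to-blue ratio); the image names, the score, and the 15% tolerance are all illustrative assumptions.

```python
from statistics import median


def warmth(mean_rgb: tuple[float, float, float]) -> float:
    """Crude warmth score: red-to-blue ratio of an image's average color."""
    red, _, blue = mean_rgb
    if blue <= 0:
        raise ValueError("average blue must be positive")
    return red / blue


def outliers(images: dict[str, tuple[float, float, float]], tolerance: float = 0.15) -> list[str]:
    """Images whose warmth differs from the set median by more than the relative tolerance."""
    scores = {name: warmth(rgb) for name, rgb in images.items()}
    center = median(scores.values())
    return [name for name, score in scores.items() if abs(score - center) / center > tolerance]


# Made-up average colors for three source images.
images = {
    "key_art": (140.0, 120.0, 110.0),
    "tavern": (150.0, 125.0, 115.0),
    "glacier": (100.0, 120.0, 150.0),
}
print(outliers(images))
```

An image flagged this way is a candidate for color correction before it goes into the same series as the others.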

Skipping Post-Processing

A third mistake is assuming AI output is “final” and skipping post-production. Even strong generations typically benefit from finishing work: light stabilization to reduce micro-jitter, trimming the start and end to avoid awkward motion ramps, and consistent color correction so the clip fits the rest of a campaign. AI footage often has subtle exposure shifts, inconsistent contrast, or texture shimmer that reads as “synthetic” unless you apply a clean, unified grade. When the content is meant for ads, store pages, or professional presentations, those finishing touches are not optional—they’re what makes the result look deliberate and brand-safe.
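Trimming is the simplest of these steps to plan. As an illustration, the hypothetical helper below converts head and tail trims given in seconds into the first and last frame to keep, assuming a constant frame rate.

```python
def trim_range(frame_count: int, fps: int, head_s: float, tail_s: float) -> tuple[int, int]:
    """First and last frame index (0-based, inclusive) to keep after trimming."""
    first = round(head_s * fps)
    last = frame_count - 1 - round(tail_s * fps)
    if first > last:
        raise ValueError("trim removes the whole clip")
    return first, last


# A 5-second clip at 24 fps, cutting 0.5 s of ramp-up and 0.25 s of ramp-down.
first, last = trim_range(frame_count=120, fps=24, head_s=0.5, tail_s=0.25)
print(first, last, last - first + 1)
```

The resulting frame range can then be applied in whatever editing tool the team already uses.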

Post-processing also solves practical integration problems. If the AI clip is part of a larger sequence with typography, UI overlays, or other footage, you need the pacing and tone to match the edit. Small changes—tightening timing, smoothing motion cadence, matching saturation/contrast, and ensuring stable focal attention—dramatically improve perceived quality. In professional pipelines, AI generation is treated as a source pass, not a deliverable: you generate, select the best take, then finish it so it sits naturally alongside other produced assets. That’s the difference between “AI made this” and “this is polished video content.”

How Image to Video AI Fits Into Professional Production Pipelines

Marketing and Promotional Content

In professional marketing pipelines, image to video AI is most effective when used as a motion amplification layer rather than a replacement for traditional video production. Teams often start with strong static visuals such as key art, promotional illustrations, or campaign-specific images, and then use AI to introduce controlled motion that increases engagement without requiring full animation or live-action shoots. This approach allows marketing departments to generate a larger volume of video content for social media, store pages, and ad placements while maintaining visual consistency with existing brand assets.

Crucially, AI-generated motion is typically constrained to specific use cases: subtle camera movement, atmospheric effects, or gentle subject animation that enhances attention without overwhelming the viewer. Professional teams define clear boundaries for what AI is allowed to do, ensuring that outputs remain brand-safe and predictable. By integrating AI clips into standard editing, grading, and delivery workflows, studios achieve faster turnaround times while preserving the polish and reliability expected from commercial content.

Game Art and Concept Visualization

Within game development pipelines, image to video AI is increasingly used during pre-production and early visualization phases. Concept art, environment paintings, and mood boards can be transformed into animated sequences that communicate atmosphere, scale, and tone far more effectively than static images alone. This helps align internal teams, publishers, and external partners around a shared visual vision before committing to expensive production stages.

AI-generated video also supports rapid iteration in concept development. Art directors can test different moods, camera languages, or environmental dynamics without waiting for full 3D blockouts or animation passes. These animated concepts are not final assets but decision-making tools that reduce uncertainty and speed up approvals. When used this way, image to video AI becomes a bridge between illustration and production, improving clarity while keeping costs and timelines under control.

Hybrid Human-AI Workflows

The most successful professional pipelines treat image to video AI as one component within a hybrid workflow that combines automation with human oversight. AI handles motion generation and variation, while artists and editors retain control over direction, selection, refinement, and final presentation. This division of responsibility ensures that creative intent remains intact while repetitive or exploratory work is accelerated.

In practice, this means AI outputs are reviewed, curated, and refined rather than accepted wholesale. Teams develop internal standards for prompts, motion limits, and acceptable artifacts, and they integrate AI clips into familiar post-production processes such as editing, compositing, and color grading. Over time, this hybrid approach becomes increasingly efficient as prompt libraries, visual guidelines, and iteration patterns mature. The result is a pipeline where AI enhances productivity without compromising quality, consistency, or creative authority.

Strategic Value of Image to Video AI for Studios

Speed and Scalability

One of the most tangible strategic advantages of image to video AI for studios is the ability to scale video output without scaling production complexity at the same rate. Traditional animation and video workflows tend to scale linearly: more content requires more people, more time, and more coordination. Image to video AI weakens this relationship by allowing teams to generate motion content from existing visual assets, reducing turnaround time for additional deliverables such as ads, teasers, store visuals, and social media clips.

This scalability is especially valuable in markets where content velocity directly impacts performance, such as mobile games, live-service products, and ongoing marketing campaigns. Studios can respond faster to seasonal events, updates, or market trends without rebuilding pipelines or reallocating core teams. When integrated properly, image to video AI turns static art libraries into reusable motion assets, extending their lifespan and multiplying their output value with minimal friction.

Cost Efficiency Without Creative Compromise

From a strategic budgeting perspective, image to video AI allows studios to optimize costs without sacrificing creative intent. Instead of replacing high-end production, AI is used to offload specific categories of work that are time-consuming but not conceptually complex, such as subtle motion, atmospheric animation, or exploratory visual variations. This lets senior artists, animators, and directors focus on high-impact creative decisions rather than repetitive execution.

Cost efficiency also comes from reducing waste in early-stage production. By using AI-generated video to test ideas quickly, studios can validate creative directions before committing to expensive animation or full production. This reduces the risk of late-stage changes and improves decision quality across the pipeline. Strategically, this means budgets are spent with greater confidence, and production resources are allocated where they create the most value.

Competitive Differentiation and Experimentation

Studios that adopt image to video AI strategically gain an advantage in creative experimentation and differentiation. The ability to explore multiple visual directions quickly enables teams to test concepts that would otherwise be too risky or costly. This flexibility encourages experimentation in marketing language, visual tone, and presentation style, which is increasingly important in crowded and highly competitive markets.

Over time, this experimentation feeds into stronger creative instincts and faster iteration cycles. Studios develop a better understanding of what resonates with their audience because they can test more ideas in less time. Rather than standardizing output, image to video AI supports smarter creative risk-taking, allowing studios to stand out while still operating within controlled, production-safe boundaries. When used intentionally, it becomes not just a tool for efficiency, but a lever for creative and strategic growth.

Final Thoughts on Image to Video AI Production

Image to video AI is not a shortcut around production expertise, but a powerful multiplier when integrated into professional workflows. Its real value lies in the ability to transform existing visual assets into motion-driven content quickly, enabling studios to communicate ideas, market products, and explore creative directions at a pace that traditional pipelines cannot match. However, the quality and usefulness of AI-generated video depend heavily on how well the process is structured, from image preparation and prompt design to iteration discipline and post-production refinement.

Studios that treat image to video AI as a controlled production tool rather than an experimental novelty gain a clear strategic advantage. By combining human art direction with AI-driven motion generation, teams can scale output, reduce risk in early decision-making, and extend the lifespan of their visual assets without sacrificing consistency or brand integrity. In this hybrid model, AI accelerates execution while creative authority remains firmly in human hands.

For studios and publishers looking to integrate image to video AI into marketing, game art visualization, or promotional pipelines at a professional level, working with AAA Game Art Studio provides access to structured AI video workflows guided by experienced art direction. By combining AI-driven production with deep expertise in game art, visual storytelling, and long-term collaboration, the studio helps teams turn static visuals into scalable, controlled, and production-ready video content.
